We conducted a survey of academic psychologists about their views on the state of the field, including their opinions on the severity of the replication crisis, whether the field has improved in recent years, and what reforms to research practices would be useful.

After emailing the survey to more than 2,500 academic psychologists and promoting it on relevant listservs, our newsletters, and social media, we received 87 fully completed surveys and another 123 that answered at least some of the substantive questions we asked. All 210 of these respondents identified themselves as either experts or experts in training in psychology or a related field. Another 63 participants did not meet our screening criteria because they indicated that they were not experts or experts in training in a relevant field, so their data were excluded from all analyses.

| Question: Are you an expert in psychology? | Number of included participants | % of included participants |
|---|---|---|
| I am an expert in psychology or a related field (e.g., I have a PhD, am a practitioner, or am a professor) | 136 | 64.76% |
| I am an expert in training or have a master’s degree (e.g., I am currently doing my master’s or PhD or already have a master’s degree) | 74 | 35.24% |
| I am not an expert or expert in training. | Excluded From Data Analysis | |
| Total Participants: | 210 | 100% |

While we attempted to reach a wide range of academic psychologists without biasing the sample in any particular direction, our sample of respondents is, of course, not going to be perfectly representative of the field. For example, we can’t rule out the possibility that those who chose to respond were more likely to have certain opinions than a perfectly random sample of academic psychologists. For more information about the participants and to access the anonymized data from the study, see the appendix.

Here’s what we learned about how psychologists think the field is doing:

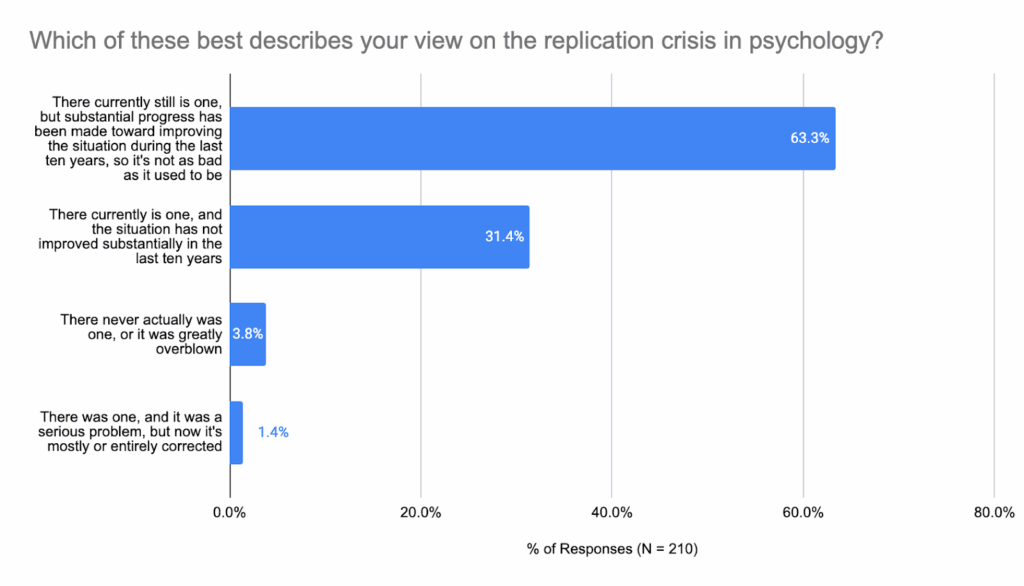

1. They believe the replication crisis is real and still happening, but that meaningful progress has been made

Nearly two-thirds of the participants in our study believed that the replication crisis was a real, serious issue, but that substantial progress has been made to address it. Nearly one-third believed it was a serious issue, but that little progress has been made. Only 5% of participants believed it was either never an issue, or that it was an issue that had been completely solved.

It’s striking how large a majority believes the replication crisis is still ongoing, although most who hold that view also believe that substantial progress has been made.

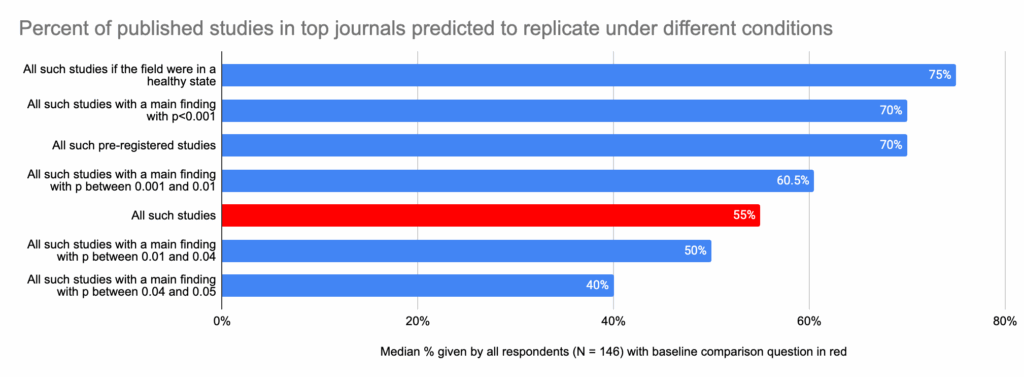

2. Psychologists predict that 55% of new studies in top journals would replicate (median estimate), rising to a median of 75% if the field were in a healthy state.

In order to get a more quantitative assessment of how academic psychologists believed the field was doing with respect to replicability, we asked them to predict how likely a study in a top journal would be to replicate under different conditions. The question we asked was:

What percent of studies published in the last 12 months in what you consider to be one of the top 5 psychology journals do you think would replicate in a high powered (i.e., 99% power) replication that is completely faithful to the original study design?

The first version of the question established participants’ baseline prediction for “all such studies.” We then asked about more specific circumstances to see how they changed psychologists’ predictions about replicability (the conditions of the baseline question still applied, such as considering only recently published papers in the top 5 psychology journals). The circumstances we asked about, in order, were:

- All such studies [baseline question]

- All such pre-registered studies

- All such studies with a main finding with p<0.001

- All such studies with a main finding with p between 0.001 and 0.01

- All such studies with a main finding with p between 0.01 and 0.04

- All such studies with a main finding with p between 0.04 and 0.05

- All such studies if the field were in a healthy state

The overall question and each of the 7 circumstances were displayed on screen at the same time, and participants responded to each one using a slider ranging from 0% to 100% in 1% increments. The chart below shows the median percentage of studies that academic psychologists predicted would replicate under each of the circumstances, with the red bar showing the median replication prediction for the baseline circumstance “All such studies.”

The median estimated replication rate for studies “if the field were in a healthy state” was 75%, 20 percentage points higher than the 55% median for the current replication rate. Pre-registered studies and studies with p < 0.01 were predicted to be more likely to replicate than the baseline, while studies with p-values between 0.01 and 0.05 were predicted to be less likely to replicate. Interestingly, respondents see pre-registered studies and studies whose main finding has p < 0.001 as nearly as likely to replicate as studies in a healthy field (70% each, compared with 75%).

This suggests that academic psychologists believe the field has some work left to do, that pre-registration is an effective tool for improving replicability, and that very small p-values are a meaningful indicator of replicability.

It is worth noting that the median predicted replication rates were a little higher than the means for the top three questions in the table below, while the means and medians were close for the other four questions. The response distributions suggested that a few low outliers were pulling down the means for those top three questions, so we focus on the medians in this discussion. The table below displays the mean and median values for comparison.

| What Percent of Published Studies in top journals would replicate? (N = 146) | Mean % | Median % |
|---|---|---|
| All such studies if the field were in a healthy state | 68.2% | 75% |
| All such studies with a main finding with p<0.001 | 65.0% | 70% |
| All such pre-registered studies | 64.8% | 70% |
| All such studies with a main finding with p between 0.001 and 0.01 | 59.2% | 60.5% |
| All such studies | 55.0% | 55% |
| All such studies with a main finding with p between 0.01 and 0.04 | 49.4% | 50% |
| All such studies with a main finding with p between 0.04 and 0.05 | 41.8% | 40% |
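To illustrate how a few low outliers can pull a mean below the median, here is a minimal Python sketch. The slider responses are made up for illustration and are not survey data.

```python
from statistics import mean, median

# Hypothetical 0-100 slider responses to a single question: most respondents
# cluster in the 70s, but two give very low estimates.
responses = [75, 70, 80, 75, 72, 78, 70, 75, 5, 10]

print(f"mean   = {mean(responses):.1f}")    # pulled down by the two low values
print(f"median = {median(responses):.1f}")  # stays with the bulk of responses
```

The median depends only on the middle of the sorted responses, so a handful of extreme values barely moves it, while every value contributes to the mean.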

Interestingly, in an analysis of an unrelated data set of 325 studies that had undergone replication, we found that when the original study’s p-value was less than or equal to 0.01, about 72% of the papers replicated (slightly higher than the 60.5% to 70% range of estimates in this study). But when p was larger than 0.01, only 48% replicated (within the 40% to 50% range of estimates in this study). Exact numbers are likely to vary with the data set used, since replication rates probably differ across fields and topics.
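The split used in that analysis (original p ≤ 0.01 versus p > 0.01) can be sketched as follows. The `ReplicationRecord` type and `replication_rates` helper are hypothetical names, and the records are invented for illustration; they are not the 325-study data set.

```python
from dataclasses import dataclass


@dataclass
class ReplicationRecord:
    original_p: float  # p-value of the original study's main finding
    replicated: bool   # whether the replication attempt succeeded


def _share(outcomes):
    """Fraction of True values, or NaN for an empty group."""
    return sum(outcomes) / len(outcomes) if outcomes else float("nan")


def replication_rates(records, threshold=0.01):
    """Replication rate for p <= threshold and for p > threshold."""
    low_p = [r.replicated for r in records if r.original_p <= threshold]
    high_p = [r.replicated for r in records if r.original_p > threshold]
    return _share(low_p), _share(high_p)


# Invented example records, not real replication data.
records = [
    ReplicationRecord(0.0001, True), ReplicationRecord(0.004, True),
    ReplicationRecord(0.009, False), ReplicationRecord(0.01, True),
    ReplicationRecord(0.02, False), ReplicationRecord(0.035, True),
    ReplicationRecord(0.045, False), ReplicationRecord(0.049, False),
]
low_rate, high_rate = replication_rates(records)
print(f"p <= 0.01: {low_rate:.0%} replicated; p > 0.01: {high_rate:.0%}")
```

Note that a study with exactly p = 0.01 falls in the lower group, matching the “less than or equal to 0.01” wording above.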

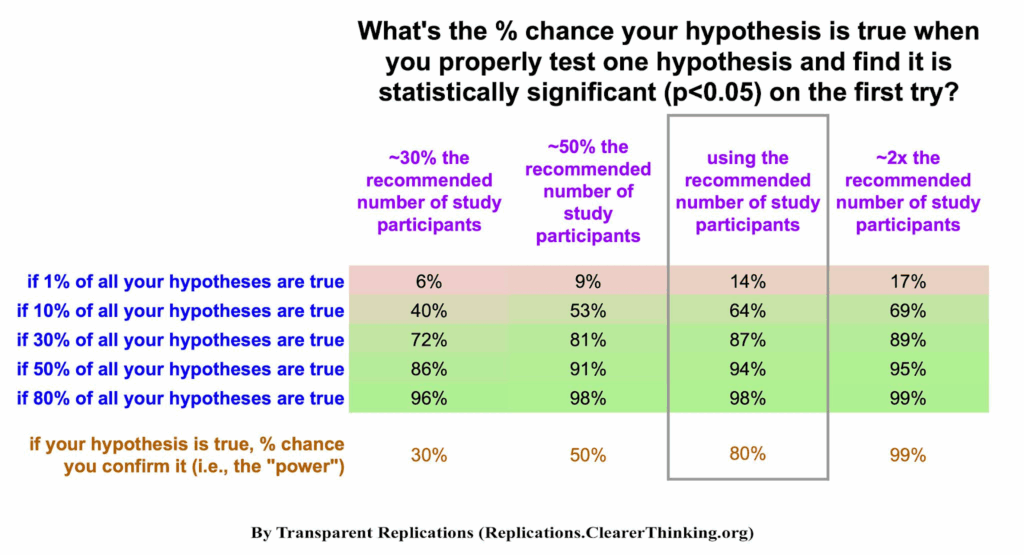

We were somewhat surprised that the median replicability “if the field were in a healthy state” was only 75%; our team anticipated that people would put healthy replicability closer to 80% to 90%. To see where our expectation came from, consider the chart below. If studies are designed to have a reasonable level of statistical power (e.g., 80%), there is a 50% prior chance that a studied hypothesis is true, and p < 0.05 is achieved on the first try, then 94% of such results should be replicable.

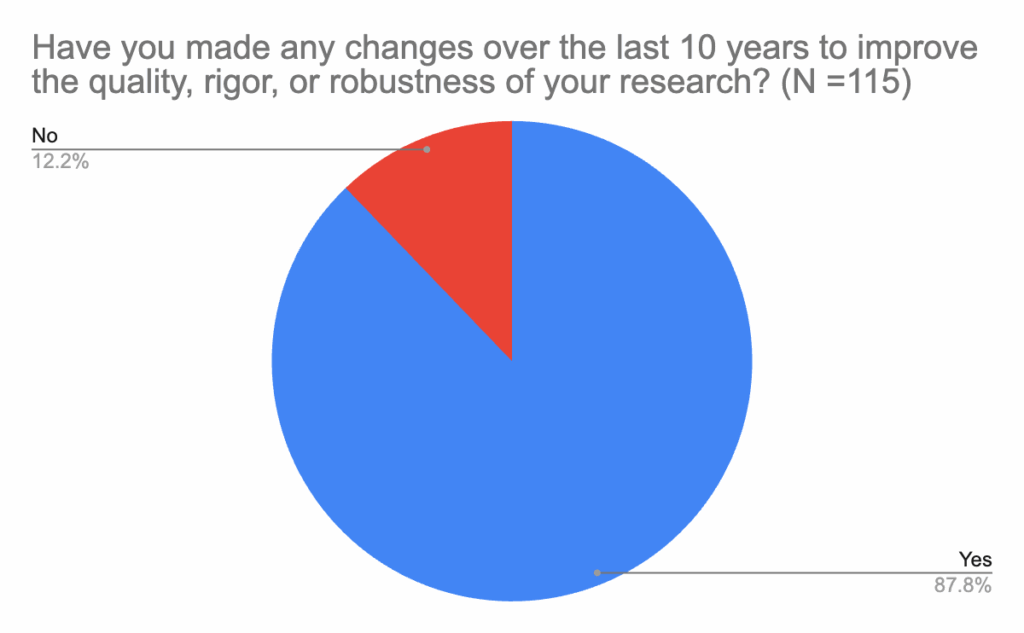
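One way to arrive at the 94% figure is the standard positive-predictive-value (PPV) calculation: among results that reach p < 0.05, the share that reflect true effects. This sketch assumes one test per hypothesis, ignores the direction of effects, and treats “replicable” as “the hypothesis is true.” The second function adds our own simplifying assumption about how often a 99%-powered replication would succeed.

```python
def positive_predictive_value(power, prior, alpha=0.05):
    """P(hypothesis is true | p < alpha on the first try)."""
    true_positives = power * prior         # true hypotheses that reach p < alpha
    false_positives = alpha * (1 - prior)  # false hypotheses that reach p < alpha
    return true_positives / (true_positives + false_positives)


def expected_replication_rate(power, prior, alpha=0.05, replication_power=0.99):
    """Share of first-try significant results expected to reach p < alpha again.

    Simplifying assumption: a true effect replicates with probability
    replication_power, and a false positive "replicates" with probability alpha.
    """
    ppv = positive_predictive_value(power, prior, alpha)
    return ppv * replication_power + (1 - ppv) * alpha


ppv = positive_predictive_value(power=0.8, prior=0.5)
rep = expected_replication_rate(power=0.8, prior=0.5)
print(f"PPV = {ppv:.1%}, expected replication rate = {rep:.1%}")
# PPV = 94.1%, expected replication rate = 93.5%
```

Lower priors reduce the PPV quickly: with the same 80% power but a 25% prior, the formula gives 0.2 / (0.2 + 0.0375), or about 84%.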

3. Researchers report that they have changed their own research practices, and they estimate that their future papers are 5 to 7 percentage points more likely to replicate than their past papers.

Consistent with the belief that the field is improving, the vast majority of academic psychologists reported that they had made changes to their research practices in the last 10 years to improve the quality, rigor, or robustness of their research. Of the 139 people who responded to this question, 115 reported that they had conducted research in the last 10 years; the remaining 24 had not and aren’t included in the chart below. Among respondents who had conducted research in the last 10 years, 88% said they had made changes to improve their research during that time.

We asked an open-ended follow-up question to those who reported that they had made changes to their research: “What are the most important changes you have made over the last 10 years to improve the quality, rigor, or robustness of your research?” The most common change researchers reported was pre-registering their studies. Participants also mentioned publicly sharing data, materials, and analysis code, as well as increasing sample sizes and conducting power analyses.

We asked those who had done research in the past 10 years but had not made changes a checkbox follow-up question about why not. The most common response was that they were already using best practices (9 out of 14 people).
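The counts in this section fit together as follows. Because the 88% figure is rounded, the number of researchers who made changes (about 101) is our back-calculation rather than a number reported directly.

```python
responded = 139     # answered the "made changes?" question
did_research = 115  # conducted research in the last 10 years
not_included = responded - did_research  # no recent research: 24

# 88% is a rounded share, so this back-calculated count is approximate.
made_changes = round(0.88 * did_research)  # about 101
no_changes = did_research - made_changes   # 14, matching "9 out of 14"

print(not_included, made_changes, no_changes)  # 24 101 14
```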

In addition to asking psychologists about changes to their research practices, we also asked them to assess the replicability of their own past and planned future work. Below are the mean, median and modal percentages of their own work that academic psychologists expect would replicate in a high-powered replication.

| Question | Mean | Median | Mode |
|---|---|---|---|
| What percentage of your own (already published) empirical psychology studies do you think would replicate in high-powered (i.e., 99% power) replications that are completely faithful to your original study designs? (N = 115) | 68.1% | 72.0% | 75.0% |
| Considering future empirical psychology studies you may one day run, what percentage of them do you think would replicate in high-powered (i.e., 99% power) replications that are completely faithful to your original study designs? (N = 115) | 74.7% | 79.0% | 80.0% |
| Change in estimated replicability, in percentage points (future study replicability minus past study replicability) | 6.6 | 7.0 | 5.0 |

Across the mean, median, and modal responses, psychologists rated their planned future work as 5 to 7 percentage points more likely to replicate than their past work.

A paired-samples t-test shows that the difference between psychologists’ mean predicted replicability for their planned future studies and for their past studies is modest but statistically significant (p < 0.001), suggesting that academic psychologists are slightly more optimistic about the replicability of their future work than their past work.
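A paired-samples t-test works on each respondent’s own difference (future estimate minus past estimate) rather than comparing two independent groups. Below is a minimal standard-library sketch, assuming each respondent supplied both estimates. It uses a normal approximation for the p-value, which is reasonable for a sample as large as N = 115 but not for small samples; a library routine such as `scipy.stats.ttest_rel` gives exact t-distribution p-values. The example data are hypothetical.

```python
from math import sqrt
from statistics import NormalDist, mean, stdev


def paired_t_test(past, future):
    """Return (mean difference, t statistic, approximate two-sided p-value).

    The p-value uses a standard normal approximation to the t distribution,
    which is only adequate for large samples.
    """
    if len(past) != len(future) or len(past) < 2:
        raise ValueError("need two equal-length samples with at least 2 pairs")
    diffs = [f - p for p, f in zip(past, future)]  # per-respondent change
    se = stdev(diffs) / sqrt(len(diffs))           # standard error of mean diff
    if se == 0:
        raise ValueError("all differences are identical; t is undefined")
    t = mean(diffs) / se
    p_approx = 2 * (1 - NormalDist().cdf(abs(t)))
    return mean(diffs), t, p_approx


# Hypothetical estimates (percent) from six respondents, for illustration only.
past = [70, 60, 80, 75, 50, 65]
future = [75, 70, 80, 80, 60, 70]
mean_diff, t, _ = paired_t_test(past, future)
print(f"mean difference = {mean_diff:.2f} points, t = {t:.2f}")
```

Pairing removes between-person variation (some respondents are optimistic about everything), which is why this test can detect a modest within-person shift.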

Participants, on average, predicted that their own past work would replicate at a higher rate than their predicted replication rate for top journals in the field overall, and they predicted that their future work’s replication rate would exceed the replication rate for top journals if the field were in a healthy state. Perhaps it’s not surprising that people perceive their own work to be above average, but it does suggest that either participants’ “healthy state” prediction is a little low or their assessment of their own future work is too high.

Since people are likely to be overly positive in their assessments of their own work, we don’t think the baseline replicability assessments people provide for their own work are especially useful for understanding the state of the field; however, we do think comparisons between participants’ assessments of their own past work and their own future work may provide useful insights. For example, if participants thought their future work would replicate at the same rate as their past work, it would suggest that they planned to use the same research practices in future work as they used in the past. Researchers saying that they expect their future work would be more likely to replicate than their past work suggests that they are changing their research practices in ways they believe will improve the replicability of their future work compared to their past work.

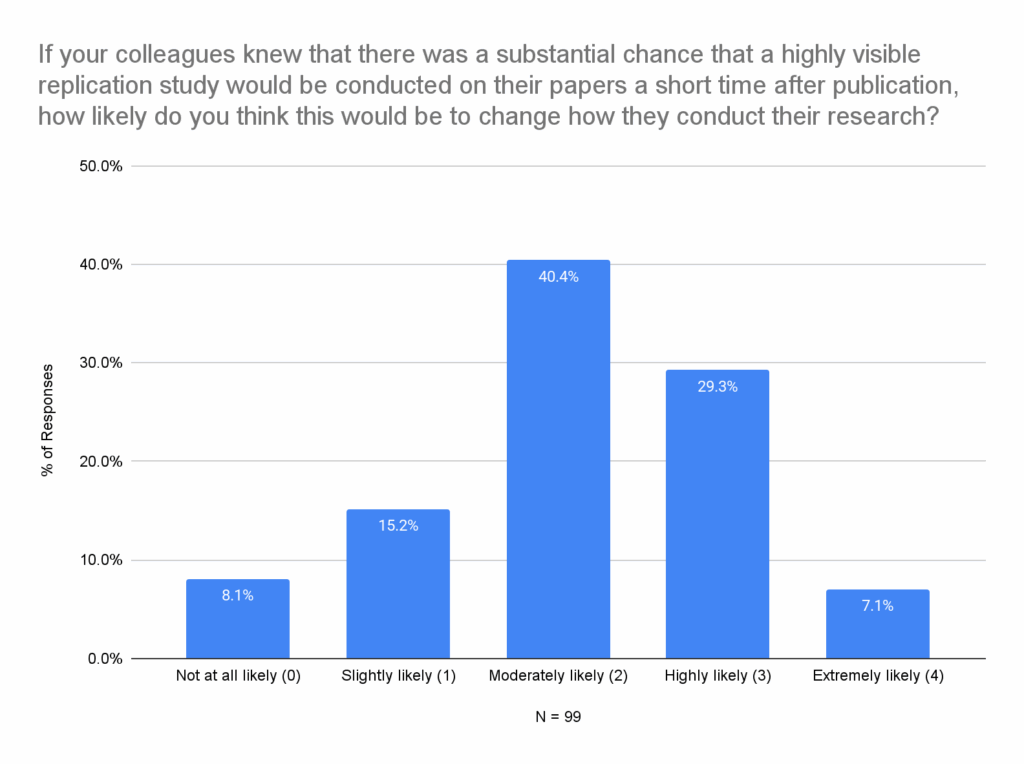

4. Academic psychologists believe that a substantial likelihood of a visible replication soon after publication would change their colleagues’ research practices

We asked participants to consider how their colleagues’ research practices might change if there were a substantial chance of a highly visible replication of their paper being performed shortly after publication. Most respondents chose either “moderately likely” (40%) or “highly likely” (29%).

When given a list of 12 research practices and asked to check any that they thought their colleagues would be more likely to adopt if replication were more common, respondents most often chose larger sample sizes, power analysis, not submitting findings researchers lacked confidence in, and pre-registration. The full list is in the table below:

| Research Practice | % of participants who checked (N=96) | Number of participants who checked |
|---|---|---|
| Using larger sample sizes | 67.7% | 65 |
| Using a power analysis to determine an adequate sample size for the study | 65.6% | 63 |
| Not submitting findings that they aren’t confident will replicate | 65.6% | 63 |
| Pre-registering study design and planned analyses | 61.5% | 59 |
| Clearly reporting effect sizes for key findings | 55.2% | 53 |
| Making study materials publicly available | 55.2% | 53 |
| Making data publicly available | 53.1% | 51 |
| Making analysis code publicly available | 50.0% | 48 |
| Running confirmatory studies to check the reliability of results prior to submitting | 47.9% | 46 |
| Including multiple studies in the paper testing the same hypotheses | 40.6% | 39 |
| Reporting all of the variables that were collected | 39.6% | 38 |
| Including the “Simplest Valid Analysis” | 28.1% | 27 |
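Because respondents could check any number of boxes, each percentage in the table is out of the 96 respondents who answered, and the column does not sum to 100%. This short sketch recomputes the percentages from the reported counts; the practice labels are our abbreviations of the table rows.

```python
N_RESPONDENTS = 96  # respondents who answered the checkbox question

# Counts from the table (multi-select, so they sum to more than 96).
counts = {
    "Using larger sample sizes": 65,
    "Using a power analysis to determine sample size": 63,
    "Not submitting findings they aren't confident will replicate": 63,
    "Pre-registering study design and planned analyses": 59,
    "Clearly reporting effect sizes for key findings": 53,
    "Making study materials publicly available": 53,
    "Making data publicly available": 51,
    "Making analysis code publicly available": 48,
    "Running confirmatory studies before submitting": 46,
    "Including multiple studies testing the same hypotheses": 39,
    "Reporting all of the variables that were collected": 38,
    "Including the \"Simplest Valid Analysis\"": 27,
}


def percent_of_respondents(count, n=N_RESPONDENTS):
    """Share of respondents who checked a box, as a percentage to 1 decimal."""
    return round(100 * count / n, 1)


for practice, count in counts.items():
    print(f"{percent_of_respondents(count):5.1f}%  {count:3d}  {practice}")
```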

Key Takeaways

The academic psychologists who responded to our survey still see the replication crisis as an ongoing, serious problem; however, they also see improvement over the last decade. This is most clearly reflected in nearly two-thirds of psychologists selecting the response, when asked about the replication crisis in psychology, “There currently still is one, but substantial progress has been made toward improving the situation during the last ten years, so it’s not as bad as it used to be.”

We also see this belief about the state of the field reflected in the psychologists’ answers to other questions in our survey. Academic psychologists predicted that, at present, only 55% of studies published in top five psychology journals would replicate, whereas the median prediction if the field were in a healthy state was that 75% would replicate. There is a 20 percentage point gap between where psychologists believe the field is today, and where they believe it should be in terms of replicability, which serves as additional evidence that academic psychologists see the replication crisis as an ongoing issue.

There is also further evidence in this survey that academic psychologists believe that progress has been made in addressing the replication crisis. The vast majority (88%) of participants who conducted research over the last decade reported that they have made quality, rigor, or robustness improvements to their own research practices. Experts also predicted a 5 to 7 percentage point improvement in the replicability rate of their own planned future studies compared to their own previously published studies, suggesting that participants believe that their future research practices will be more robust than those used in some of their previously published work.

Additionally, more than three-quarters of academic psychologists surveyed reported that they believe their colleagues would be at least moderately likely to make changes to their research practices if there was a substantial chance of a highly visible replication attempt shortly after publication. This suggests that academic psychologists believe that their colleagues respond to incentives when making research decisions, and that sufficiently large changes in the incentives around the use of best practices may have a good chance of increasing adoption of these practices.

How do psychologists’ perceptions of the field compare to how the field is actually doing? We ran replications of 12 randomly selected, recently published studies from top journals, and what we found diverges in a few unexpected ways from the predictions of experts in the field. Part 2 of this series explores those results.

This article is the first in a four-part series. For more of what we learned, check out Part 2 on our first dozen replication attempts.

Appendix: Demographics of Survey Participants and Anonymized Data

Demographics

Education in Psychology or a related field

Of the 210 participants who considered themselves experts or experts in training, participants listed the following education levels:

| Question: What is the highest position or degree you’ve obtained in psychology, behavioral science or other related fields? | Number of Participants | % of Participants |
|---|---|---|
| Tenured professor | 50 | 23.81% |
| Professor but not tenured | 34 | 16.19% |
| Completed PhD but have never been a professor | 31 | 14.76% |
| Started or have a PhD in progress but haven’t finished it | 31 | 14.76% |
| Completed a Masters degree but have not started a PhD | 34 | 16.19% |
| Started a Masters degree but haven’t finished it | 17 | 8.10% |
| Completed an undergraduate degree but have not started a higher degree | 2 | 0.95% |
| Started an undergraduate degree but have not finished it | 4 | 1.90% |
| None of the above | 7 | 3.33% |
| Total: | 210 | 100.00% |

Note that a few of these participants may not seem to qualify as experts or experts in training based on their answer to this question; we used participants’ self-identification for the main data analysis. The main data analysis excluded 63 people who participated in the survey but indicated that they were not experts or experts in training in psychology or a related field.

Subfield

| Question: What field best describes your expertise? | Number of participants | % of participants |
|---|---|---|
| Social and Personality Psychology | 63 | 30.0% |
| Clinical, Health, and Forensic Psychology | 32 | 15.2% |
| Cognitive and Neuropsychology / Neuroscience | 27 | 12.9% |
| Developmental and Educational Psychology | 23 | 11.0% |
| Judgment and Decision Making | 21 | 10.0% |
| Industrial-Organizational Psychology / Management | 11 | 5.2% |
| Behavioral Economics | 4 | 1.9% |
| Other | 29 | 13.8% |
| Total: | 210 | 100.0% |

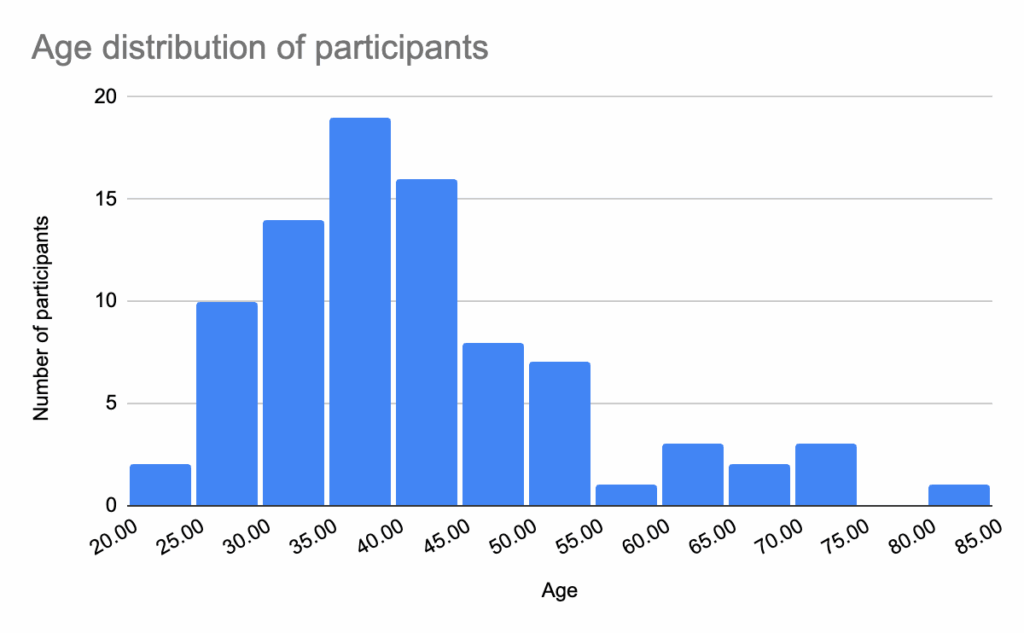

We asked a few more basic demographic questions at the end of the survey, so the responses below only include participants (N = 87) who made it all the way to the end of the study.

Age

| Statistic | Participant Age (N = 86) |
|---|---|
| Mean | 41.34 |
| Median | 39 |
| Mode | 42 |

Note that one participant’s age was excluded because it was reported as 10, outside the plausible range for the study.

Gender

| Question: Which gender do you identify most with? | Number of participants | % of participants |
|---|---|---|
| Male | 57 | 65.52% |
| Female | 27 | 31.03% |
| Other (fill in the blank) | 1 | 1.15% |
| Prefer not to say | 2 | 2.30% |
| Total: | 87 | 100.00% |

Ethnicity

Participants were asked “Which of these categories describe you? (Select all that apply).”

| Race, Ethnicity or Origin | Number of participants | % of participants |
|---|---|---|
| White, Caucasian or European | 71 | 81.61% |
| Latino, Hispanic or Spanish origin | 5 | 5.75% |
| More than one race/ethnicity (these participants checked more than one box) | 4 | 4.60% |
| East Asian (e.g. Chinese, Japanese) | 3 | 3.45% |
| Some other race or ethnicity** / Prefer not to respond | 2 | 2.30% |
| American Indian or Alaska Native | 1 | 1.15% |
| Southeast Asian (e.g. Indonesian, Filipino) | 1 | 1.15% |
| Black, African or African Descent | 0 | 0.00% |
| Middle Eastern, Arab or North African | 0 | 0.00% |
| Pacific Islander or Native Hawaiian | 0 | 0.00% |
| South Asian (e.g. Indian, Pakistani) | 0 | 0.00% |
| Total | 87 | 100.00% |

** These two participants checked the box “Some other race or ethnicity” but indicated in the text field that they preferred not to respond or that the question was irrelevant.

Anonymized Dataset and Open-Ended Responses

Anonymized .csv dataset

This .csv file includes anonymized closed-ended survey responses for the 210 participants included in the main data analysis. Note that most demographic information is not included in the anonymized dataset file to prevent the identification of individual participants.

Anonymized open-ended responses

This .pdf includes the open-ended responses to the survey from the 210 participants included in the main dataset. The order of these responses has been randomized so they do not correspond to the order of the anonymized dataset. These responses have been lightly redacted to remove specific examples and other comments that may have allowed the identification of participants.