Executive Summary

| Transparency | Replicability | Clarity |

|---|---|---|

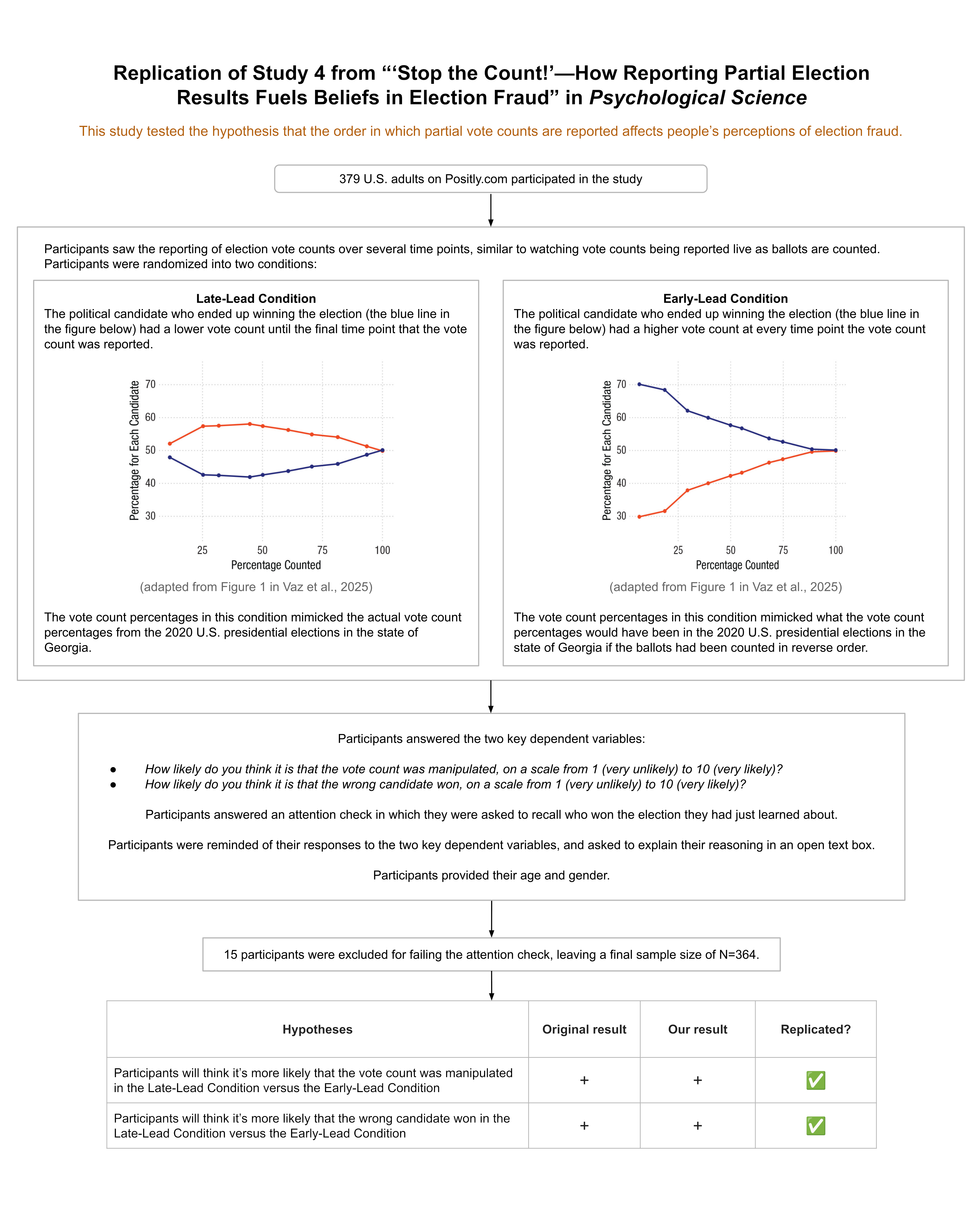

We ran a replication of Study 4 from this paper, which found that the order in which partial vote counts are reported during an election can affect people’s perceptions of election fraud.

During an election, partial vote totals are often reported as votes are counted across different municipalities. Inevitably, some municipalities are faster or slower to report electoral results given factors such as poll closing times or the volume of votes to be counted. This can cause candidates who ultimately lose the race to appear ahead at certain periods as vote counts are continuously updated.

The authors of this study hypothesized that people would be more likely to believe election fraud occurred when the electoral candidate who ultimately won took a late lead, rather than an early lead, during the continuous reporting of partial election returns. The authors hypothesized this given research on the Cumulative Redundancy Bias (CRB)—the finding that people harbor better impressions of competitors who were leading during a competition, regardless of the competition’s final outcome (see Summary of the methods for a more detailed definition).

The results from the original study confirmed the authors’ predictions: When the winning candidate took a late lead versus an early lead in the partial reported vote counts, participants were more likely to think that election fraud had occurred and that the wrong candidate had won.

Our replication found the same results.

The study received a transparency rating of 5 stars because its materials, cleaned data, and analysis code were publicly available, and it adhered to its preregistration. The paper received a replicability rating of 5 stars because all of its primary findings replicated. The study received a clarity rating of 5 stars because the claims were well-calibrated to the study design and statistical results.

Full Report

Study Diagram

Replication Conducted

We ran a replication of Study 4 from: Vaz, A., Ingendahl, M., Mata, A., & Alves, H. (2025). “Stop the Count!”—How Reporting Partial Election Results Fuels Beliefs in Election Fraud. Psychological Science, 36(8), 676-688. https://doi.org/10.1177/09567976251355594

How to cite this replication report: Transparent Replications by Clearer Thinking (2025). Report #14: Replication of a study from “‘Stop the Count!’—How Reporting Partial Election Results Fuels Beliefs in Election Fraud” (Psychological Science | Vaz et al 2025) https://replications.clearerthinking.org/2025psci36-8

Key Links

- Our Research Box for this replication report includes the pre-registration, study materials, de-identified data, and analysis files.

Overall Ratings

To what degree was the original study transparent, replicable, and clear?

| Transparency: how transparent was the original study? | Materials, analysis code, and cleaned data were publicly available, and raw data was provided upon request. The study was pre-registered and the preregistration was adhered to. |

| Replicability: to what extent were we able to replicate the findings of the original study? | All primary findings from the original study replicated. |

| Clarity: how unlikely is it that the study will be misinterpreted? | This study is explained accurately, the statistics used for the main analyses are straightforward and interpreted correctly, and the claims were well-calibrated to the study design and statistical results. |

Detailed Transparency Ratings

| Overall Transparency Rating: | |

| 1. Methods Transparency: | The materials were publicly available and were complete. |

| 2. Analysis Transparency: | The analysis code was publicly available and complete. |

| 3. Data availability: | The cleaned data were publicly available and complete. The raw data were provided upon request. |

| 4. Preregistration: | The study was preregistered and the preregistration was adhered to. |

Summary of Study and Results

Summary of the methods

During an election, partial vote counts are often reported as votes are being tallied up across different municipalities. If municipalities report their votes in different orders, the trajectory of vote counts could look radically different, even though the final tally is the same.

The original study (N=195) and our replication (N=364) examined whether the order in which partial vote counts are reported affect people’s perceptions of election fraud.

The original authors hypothesized that people would be more likely to attribute election fraud when the candidate who ultimately wins gains the lead towards the end of the vote count reporting period. For example, a candidate who was trailing for most of the night as partial vote counts rolled in, but then ended up gaining a late lead and winning the election, might be more likely to be accused of election fraud than a winning candidate who led the vote count the whole night.

The authors hypothesized this given their previous research on the Cumulative Redundancy Bias. The Cumulative Redundancy Bias is a cognitive bias that occurs when people observe the progression of a competition in a cumulative format (e.g., votes added to a running total). In such a cumulative format, any new observation already contains the data from previous standings, such that a rational individual should ignore previous standings and only rely on the end result in their judgment. However, people are nevertheless influenced by interim standings and judge a “leading” competitor more positively, even if the end result is the same. As the authors state, “The repeated observation of a competitor being ahead seems to leave a lasting impression on observers that is not entirely erased by the final result” (Vaz et al., 2025, p. 677).

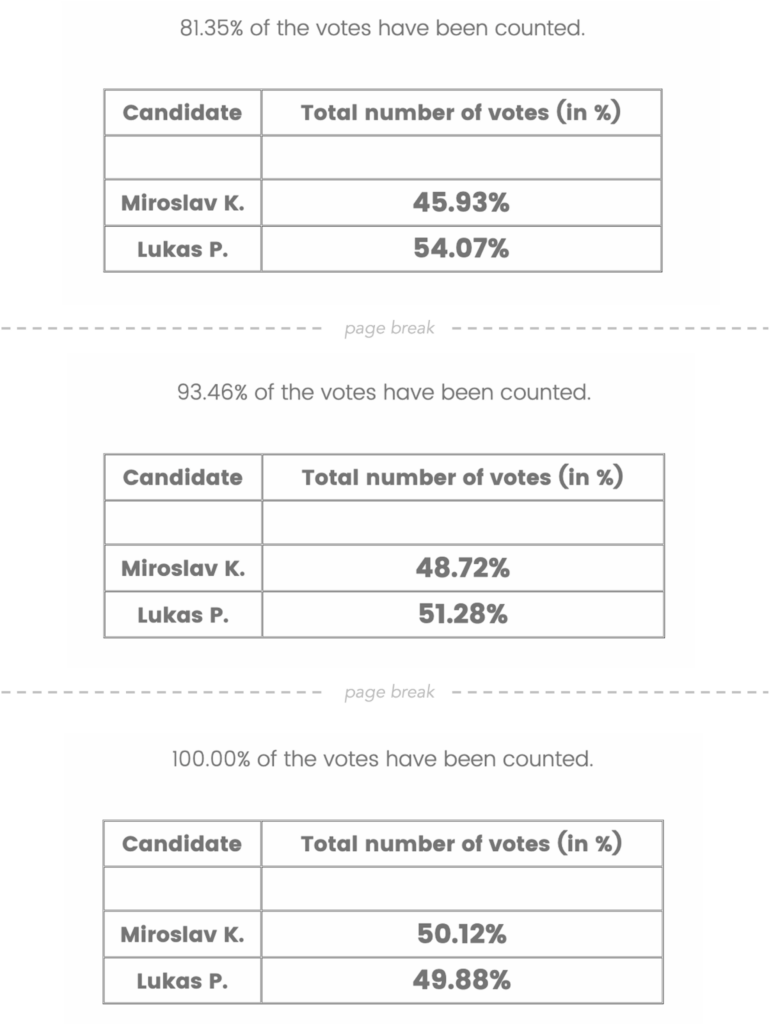

To test their hypothesis about attributions of election fraud, the authors showed participants the progression of partial vote counts of an alleged election in Eastern Europe. Participants saw the accumulated vote counts for two different candidates across 10 timepoints, where each timepoint corresponded to roughly 10% more of the vote coming in. For example, below are screenshots of the final three timepoints that some participants saw.

Importantly, however, there were two different patterns of results participants might see.

Participants in the Late Lead Condition saw accumulated vote counts such that the candidate who ultimately won was trailing at each of the first 9 timepoints before gaining the lead at the 10th and final timepoint.

Participants in the Early Lead Condition saw accumulated vote counts such that the candidate who ultimately won was leading during all 10 of the timepoints.

The final timepoint that participants saw, which presented 100% of the vote count, was the exact same in both conditions. The key difference between the conditions was that the candidate who ultimately won appeared to be losing up until the final timepoint in the Late Lead condition, but appeared to be winning the whole time in the Early Lead Condition.

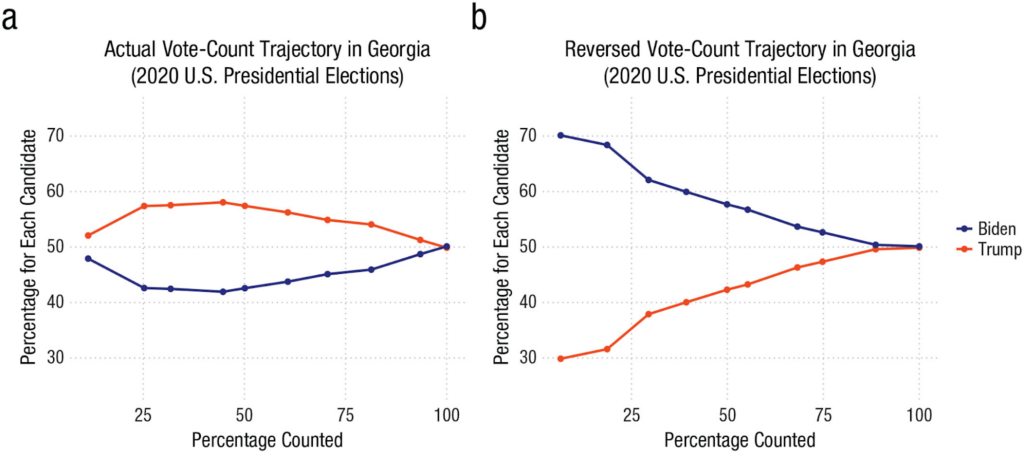

Even though participants thought they were seeing vote counts from an Eastern European election, the vote accumulations in both conditions were derived from the real election data for the state of Georgia during the 2020 U.S. presidential election. The accumulated vote counts shown in the Late Lead Condition reflected the actual order in which the vote count was reported during the election coverage. The accumulated vote counts shown in the Early Lead Condition presented roughly what the partial vote counts would have been if precincts had been reported in reverse order.

These two different vote count trajectories are displayed in a figure from the original paper, copied below:

After participants saw the vote counts at all 10 timepoints, they were told:

Shortly after the vote count was finished, rumours emerged that the vote count may have been rigged and that the wrong candidate won as a result. The people responsible for the vote count, however, denied the allegation.

Participants then answered the two primary questions of interest:

How likely do you think it is that the vote count was manipulated, on a scale from 1 (very unlikely) to 10 (very likely)?

How likely do you think it is that the wrong candidate won, on a scale from 1 (very unlikely) to 10 (very likely)?

On the next page, participants were asked to recall which of the candidates won the election:

You’re almost done.

Before you finish, we want to check if you remember who won the election.

* Lukas P.

* Miroslav K.

* Don’t Remember

This question served as an attention check; any participants who failed it were dropped from our analyses.

In the original study, participants then provided their age and gender to complete the study. In our replication, before participants provided their age and gender, we asked them one additional question:

Here’s how you answered two of the questions you were asked earlier:

Question: “How likely do you think it is that the vote count was manipulated, on a scale from 1 (very unlikely) to 10 (very likely)?”

Your response: {this displayed the participant’s response}

Question: “How likely do you think it is that the wrong candidate won, on a scale from 1 (very unlikely) to 10 (very likely)?”

Your response: {this displayed the participant’s response}

Can you briefly explain your reasoning?

We included this open-ended question so that we could assess whether the rationales participants provided were in line with the hypotheses put forth by the original paper.

Summary of the results

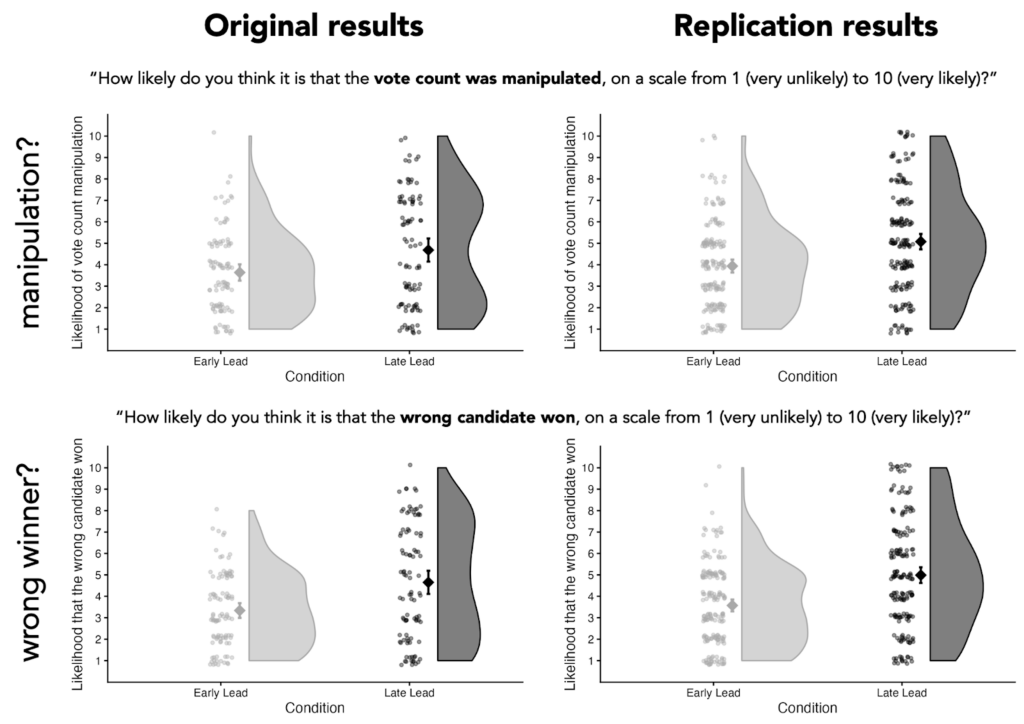

In the original study, participants in the Late Lead Condition thought it was more likely that the vote count was manipulated (p = .002) and more likely that the wrong candidate won (p < .001), on average.

Our replication found the same general pattern:

| Dependent variable | Mean difference between Late Lead and Early Lead conditions | Finding replicated? | |

| Findings from original study | Findings from our replication | ||

| “How likely do you think it is that the vote count was manipulated, on a scale from 1 (very unlikely) to 10 (very likely)?” | Mean diff: 1.04 t(170.66) = -3.14, p = .002 | Mean diff: 1.14 t(351.29) = -4.78, p < .001 | ✅ |

| “How likely do you think it is that the wrong candidate won, on a scale from 1 (very unlikely) to 10 (very likely)?” | Mean diff: 1.31 t(160.49) = -4.06, p < .001 | Mean diff: 1.41 t(335.45) = -6.05, p < .001 | ✅ |

Study and Results in Detail

This section provides details about participant recruitment, minor study design differences between the original study and the replication, differences in response distributions between the original study and the replication, and results from the open-ended question.

Additional details about the methods

Participant recruitment

We aimed to have a final sample size of 366, which would provide 90% power to detect an effect that was 75% of the size of the smaller of the two effect sizes reported in the original paper. We anticipated an exclusion rate of 3% based on that observed in the original study. As a result, we recruited 377 participants on Positly.com to complete the study.

In total, 379 participants completed the study (occasionally, a few more participants complete a study than the number recruited on the platform). After excluding any participants who failed the attention check (i.e., those who couldn’t recall which candidate won the election), we were left with a final sample size of N=364 (the sample size of the original study was N=195).

Minor design differences between the original study and the replication

We kept the study design nearly identical to that of the original study, but we made three tiny alterations. We verified our study plan, including our planned alterations, with the original authors before collecting data.

Alteration 1

In the original study, the order in which the candidate names were listed was randomized and which candidate was the winner was randomized, but the candidate who ended up winning was always listed on the top row of the table that displayed the partial election counts.

We felt that it would be better to counterbalance the order of the winner, rather than the order in which the names were listed. So we tweaked the display order such that the candidates names were always displayed in alphabetical order (Lukas first, Miroslav second), but which candidate wins—and thus whether the winner is listed first or second—was randomized.

Alteration 2

In the original study, all three response options for the attention check (Lukas P.; Miroslav K.; Don’t remember) were displayed in a random order. We thought it looked strange when it was randomized such that “Don’t remember” was the middle option. We changed the randomization such that “Don’t remember” was always the third option and “Lukas P.” and “Miroslav K.” were randomly assigned to be the first and second option.

Alteration 3

As mentioned earlier, we thought it would be helpful to have participants explain why they answered the dependent variables as they did, so we included a question asking them to explain their reasoning. This question came after the two dependent variables and the attention check, such that it would not affect the results of the study in any way.

We thought this question could provide more insight into participants’ thinking process. However, we stated in our preregistration that participants’ responses to this question would not impact the replicability score the paper received.

Additional details about the results

Differences in distributions of participant responses

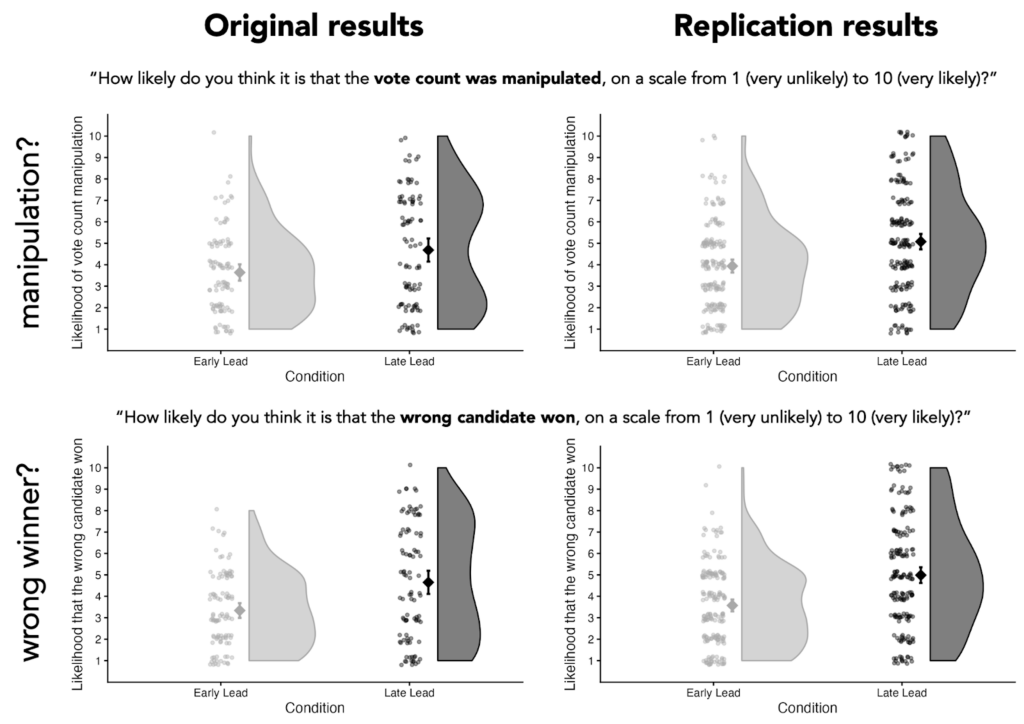

Even though the results from the statistical tests run on the original data and our replication data returned very similar results (see Table 1), some of the distributions of participants’ responses looked different between the two datasets.

As you can see in Figure 3, copied below, the distribution of participants’ responses in the Early Lead Condition look pretty similar between the original data and the replication data. However, the distributions of responses in the Late Lead Condition differ. In the original data, participants’ responses in the Late Lead Condition appear bimodal, such that there are two common responses (values in the lower-middle part of the scale, and values in the upper-middle part of the scale). In the replication data, participants’ responses appear unimodal, such that there is a single most common response (values in the middle of the scale).

It is not clear what to attribute this difference to, but future research should focus on this discrepancy because different distributions of responses tell different stories.

The original data seem to suggest that, in the Late Lead Condition, a sizable group of people think it’s likely that election fraud occurred and another sizable group of people think it’s unlikely that election fraud occurred. These data could mean that there’s a sizable group of people that are quite sensitive to whether a candidate takes a late lead, and there’s another sizable group that are insensitive to late-lead situations.

On the other hand, our replication data seems to suggest that, in the Late Lead Condition, the most common response is that people are unsure whether election fraud occurred. These data could mean that most people are slightly sensitive to whether a candidate takes a late lead.

However, because this study used a between-subjects design, it is difficult to precisely assess how many people were affected by the experimental manipulation and in what way they were affected. A within-subjects design would be needed to assess how each individual participant’s ratings differed in the Late Lead Condition compared to the Early Lead Condition. This would allow us to more accurately estimate what percentage of people are likely to be unaffected, mildly affected, and strongly affected by a candidate taking a lead late rather than an early lead.

Future work that employs a within-subjects design could provide more information about individuals’ sensitivity to late-lead dynamics, which could help contextualize the distributions observed in the Late Lead Condition.

Results from open-ended question

As described earlier, we asked participants to explain why they answered the two key questions as they did. Here’s the exact question we asked them:

Here’s how you answered two of the questions you were asked earlier:

Question: “How likely do you think it is that the vote count was manipulated, on a scale from 1 (very unlikely) to 10 (very likely)?”

Your response: {this displayed the participant’s response}

Question: “How likely do you think it is that the wrong candidate won, on a scale from 1 (very unlikely) to 10 (very likely)?”

Your response: {this displayed the participant’s response}

Can you briefly explain your reasoning?

We included this open-ended question so that we could assess whether the rationales participants provided were in line with the hypotheses put forth by the original paper. We preregistered that, since this question was not included in the original study, we would not factor participants’ responses to this question into our replicability rating.

To get a high-level sense of how participants answered this question, we ran their responses through a large language model (LLM)—specifically, ChatGPT-5.2-thinking.

It’s important to say upfront that the results from the LLM we present below should not be interpreted as rigorous, well-vetted findings. Rather, it’s akin to having a human read through all of the responses and providing their own idiosyncratic grouping of people’s explanations. That said, we still think it’s a helpful way to get an approximation of what participants were thinking. Anyone interested in doing more formal analyses on this free response data is welcome (and encouraged!) to do so. This data is publicly available here. Additionally, we’ve included the exact prompts we used and links to the full responses from the model in the Appendix (see the section titled “Additional Information about the Results”).

We asked the LLM to summarize the different types of explanations participants provided. We fed the model the responses from participants in the Early Lead Condition and the Late Lead Condition separately. In other words, we gave the model the same prompt twice and in one instance it had access to the Early Lead Condition data and in the other instance it had access to the Late Lead Condition data.

Here’s how the model summarized the responses from participants in the Early Lead Condition:

| ChatGPT’s breakdown of typical responses in the Early Lead Condition |

|---|

| 1) “It was very close, so anything could have tipped it” Many explanations anchored on the small final margin: a close race was seen as inherently more vulnerable to manipulation, counting error, or late-breaking irregularities (often paired with “should be investigated/recounted”). Approx. prevalence: ~37% referenced closeness explicitly. |

| 2) “The winner led the whole time / the trajectory looked normal” A common reasoning pattern was: the vote totals progressed consistently and the eventual winner was ahead throughout (or at least not showing a shocking reversal), which participants treated as evidence that the count was probably legitimate. Approx. prevalence: ~28% referenced a steady lead / consistent progression. |

| 3) Baseline trust in electoral administration Some participants relied primarily on a prior belief that elections are generally fair (or that large-scale rigging is unlikely / would be detected), and therefore rated manipulation and “wrong winner” as unlikely absent concrete evidence. Approx. prevalence: ~28%. |

| 4) Baseline distrust / “elections (especially here) are often rigged” Another major cluster leaned on a general cynicism about elections (sometimes explicitly tied to the setting—e.g., “Eastern Europe,” corruption, “politicians cheat,” etc.). These explanations treated the rumor as plausible because it fits a broader worldview. Approx. prevalence: ~26%. |

| 5) “The pattern looked suspicious” (gap-closing, odd shifts, “fishy” dynamics) A smaller set pointed to features of the time series—e.g., one candidate steadily closing the gap, a late surge, or otherwise “weird/odd” dynamics—as suggestive of manipulation (even if they acknowledged alternative explanations). Approx. prevalence: ~11%. |

| 6) “Reporting order / geography can explain shifts” Some participants explicitly invoked benign process explanations, such as different regions (urban vs rural, city vs country) reporting at different times, which could naturally produce changing margins as additional votes come in. Approx. prevalence: ~7%. |

| 7) “Rumor isn’t evidence / not enough information to know” A distinct explanation style emphasized epistemics: participants said the prompt provides no proof, so they cannot confidently infer manipulation or a wrong winner; they often landed on mid-scale ratings (“could go either way”) or low ratings due to lack of evidence. Approx. prevalence: ~8–10%. |

| 8) Separation of the two judgments: manipulation vs wrong winner A smaller subset explicitly distinguished: some manipulation could be possible without it being enough to flip the outcome (or conversely, the outcome could be wrong even if manipulation is uncertain). Approx. prevalence: ~3% were very explicit about this distinction. |

| 9) “If it were rigged, they wouldn’t make it that close” A few participants used a strategic argument: if an actor rigged the election, they would likely engineer a clearer margin; the fact that the result was tight was interpreted as evidence against manipulation. Approx. prevalence: ~3%. |

Here’s how the model summarized the responses from participants in the Late Lead Condition:

| ChatGPT’s breakdown of typical responses in the Late Lead Condition |

|---|

| 1. Suspicious Late Shift / “Come-from-Behind” Narrative Many participants focused on the temporal pattern of the vote count. Explanations in this category emphasize that one candidate led for most of the count, only to lose near the end. Typical reasoning: * A large or sudden swing in the final timepoints “felt suspicious.” * The winner’s late surge was described as “illogical,” “unlikely,” or “not natural.” * The timing of the shift (e.g., in the last 10–20% of votes) was taken as prima facie evidence of manipulation. This was the most common explanation among participants who rated manipulation or a wrong winner as likely. |

| 2. Stability Expectations / Linear Trend Heuristics Closely related, but conceptually distinct, some participants argued that vote shares should remain relatively stable as more votes come in. Typical reasoning: * Percentages “shouldn’t change that much” once a large portion of votes is counted. * Large deviations late in the count violate expectations of smooth or linear accumulation. * Participants implicitly assumed early trends are representative of the final outcome. This reflects a heuristic expectation of convergence that does not account for heterogeneous vote sources. |

| 3. Benign Explanations: Geographic or Demographic Differences Many participants explicitly rejected fraud explanations by invoking real-world electoral processes. Typical reasoning: * Different regions or population centers report results at different times. * Urban vs. rural areas, or regions favoring different candidates, may be counted later. * A late swing is therefore plausible without manipulation. These explanations often referenced: * Population density * Regional political preferences * Order of precinct reporting This category was especially common among participants who judged manipulation as unlikely. |

| 4. Close Race / Margin-Based Reasoning Some participants focused primarily on how close the election was overall. Typical reasoning: * Because the margin was small, late changes could realistically flip the outcome. * Close races are inherently volatile, so reversals are not surprising. * Conversely, some argued that a close margin makes manipulation easier and therefore more plausible. Thus, closeness was used both to argue for and against fraud, depending on the participant. |

| 5. Lack of Evidence / Epistemic Caution A substantial number of responses emphasized uncertainty and insufficient information. Typical reasoning: * “There’s no proof either way.” * Rumors alone are not strong evidence. * Without concrete data or corroboration, strong conclusions are unwarranted. These participants often gave moderate likelihood ratings and explicitly acknowledged ambiguity. |

| 6. Trust in Institutions or Electoral Norms Some explanations relied on generalized trust assumptions. Typical reasoning: * Election authorities denied wrongdoing, which carries weight. * Large-scale manipulation would be difficult to hide. * Elections are usually fair, even if imperfect. This reasoning often appeared in combination with skepticism toward rumor-based allegations. |

| 7. Contextual or Cross-National Analogies A smaller subset of participants referenced broader political contexts. Typical reasoning: * Comparisons to elections in other countries (e.g., “this wouldn’t happen where I live”). * Assumptions about corruption levels in Eastern Europe (sometimes explicit, sometimes implied). * General beliefs about how “rigged elections” usually look. These explanations relied more on background beliefs than on the specific vote trajectory shown. |

These summaries raise a few interesting points.

First, some of the common explanations in both conditions support the hypothesis from the original paper. According to the LLM, a common explanation in the Late Lead Condition was that it was suspicious that the winner only gained the lead towards the end of the vote count reporting cycle. Moreover, a common explanation in the Early Lead Condition was that it was unlikely that manipulation occurred given that the eventual winner was in the lead throughout the vote count reporting. Both of these explanations are consistent with the original paper’s cumulative-redundancy-bias account for why people would think manipulation is more likely in the Late Lead Condition.

Second, some participants seemed savvy to more benign reasons a candidate might gain a late lead in the vote count reporting cycle. Although these explanations were less common than those discussed in the paragraph above, some participants noted that the order in which geographic areas report vote counts can cause late-lead dynamics.

Finally, a lot of participants seemed to justify their responses by simply appealing to their prior beliefs about how common or uncommon election manipulation is.

Overall, the fact that some of the most common explanations participants provided were directly aligned with the authors’ cumulative-redundancy-bias account corroborates the authors’ hypothesis, especially for the subset of participants who provided that explanation explicitly.

It is also possible that the original paper’s account accurately explains the judgments of participants who provided explanations that do not align with the Cumulative Redundancy Bias. After all, it’s possible that some participants were influenced by the Cumulative Redundancy Bias, but didn’t realize it. Interestingly, in Study 6 of the paper (which we did not attempt to replicate), participants were provided a benign explanation for swings in the vote count totals—that votes were counted first in the rural areas where the losing candidate was more popular. The study found that, even with this explanation in hand, participants in the Late Lead Condition still thought election manipulation was more likely.

As such, it’s difficult to estimate from these explanations alone how many people’s judgments were influenced by the Cumulative Redundancy Bias. Even with this explanation data, we don’t know whether the observed differences between conditions were driven by a subset of participants responding strongly, or by a large proportion of participants responding mildly. As mentioned in the previous subsection, a within-subjects design would provide more insight into how many participants showed the hypothesized effect and to what degree. Coupled with participants’ explanations for their ratings, a within-subjects design would allow researchers to examine questions like: how many participants who were sensitive to the winning candidate taking a late lead provided an explanation consistent with this experimental manipulation? Ultimately, the fact that so many participants conjured explanations that directly align with the Cumulative Redundancy Bias supports the main hypothesis in the paper.

Interpreting the Results

All of the results from the original study replicated when analyzed on the data we collected. In addition to finding the same general patterns, the mean values and differences between conditions we observed were very similar to the original study (see Figure 3).

When assessing this study in isolation, one might wonder if the observed effects are really attributable to whether the candidate gained a late lead versus an early lead. After all, if you inspect the sequence of partial vote counts participants saw in both conditions (see Figure 2), there were other differences beyond the early-lead/late-lead factor. For example, in the Late Lead condition, the race appears fairly close at every timepoint, whereas, in the Early Lead condition, the race only becomes close towards the end. This represents a difference between the conditions that was not experimentally controlled for. From this study alone, we can’t know whether such differences mattered. However, the original paper has a total of seven studies, many of which rule out alternative explanations. For example, Study 5 uses almost exactly the same sequence of partial vote counts in both conditions, and still finds a similar effect to that observed in Study 4. We recommend reviewing the other studies in the original paper for those interested in alternative explanations for these results.

Another thing to note is that because this study used a between-subjects design, we are not able to directly assess what percentage of the participants were influenced by the order in which partial vote counts were reported. For example, it could be the case that the differences in average ratings between the conditions were due to a small number of participants being strongly affected by the partial vote count order or due to a large number of participants being mildly affected by the partial vote count order. This could be useful for future research to untangle.

Finally, it is worth mentioning that Psychological Science, the academic journal that published the original paper, has recently instituted a series of transparency requirements. They now require authors to publicly share data, study materials, analysis code, and deviations from preregistrations. They also ensure that the results in a paper are computationally reproducible and emphasize the importance of not overclaiming. These are not simply stated policies of the journal, but are elements actively assessed by specific editors at the journal whose role is to evaluate the transparency of submitted articles. (You can read about these policies in more detail, and the rationale behind them, in this editorial.)

This was the first Psychological Science paper we replicated that was published after these policies were implemented. Many of the issues we’ve run into when replicating previous studies—e.g., overclaiming, coding errors, deviations from preregistrations that went unacknowledged—were not present in this paper. We cannot, of course, attribute the quality of this paper to the new policies at Psychological Science since the authors might have taken the same actions regardless of the journal’s policies. Nevertheless, Psychological Science’s policies seem likely to substantially improve the transparency and clarity of articles published in this journal.1

1It is important to acknowledge that I (the author of this report) volunteer as a reproducibility checker for Psychological Science, which could be biasing my view on the positive potential of these policies instituted at Psychological Science.

Conclusion

Overall, we successfully replicated the two primary findings from the original study. Both the original study and our replication found that participants were more likely to think election fraud had occurred and that the wrong candidate had won when the winning candidate took a late lead, rather than an early lead.

The study received a transparency rating of 5 stars, a replicability rating of 5 stars, and a clarity rating of 5 stars.

It is important to note that the study we replicated was one of seven studies reported in the original paper. As such, our replication only directly assesses a small proportion of the findings reported in the paper.

Acknowledgements

We want to thank the authors of the original paper for making their data and materials publicly available, and for their quick and helpful correspondence throughout the replication process. Any errors or issues that may remain in this replication effort are the responsibility of the Transparent Replications team.

We also owe a big thank you to our 364 research participants who made this study possible.

Finally, we are extremely grateful to the rest of the Transparent Replications team for their advice and guidance throughout the project.

Purpose of Transparent Replications by Clearer Thinking

Transparent Replications conducts replications and evaluates the transparency of randomly-selected, recently-published psychology papers in prestigious journals, with the overall aim of rewarding best practices and shifting incentives in social science toward more replicable research.

We welcome reader feedback on this report, and input on this project overall.

Appendices

Additional Information about the Methods

The table below displays the full set of partial vote counts participants witnessed, depending on the experimental condition they were assigned to.

The “% of votes reported” column displays the percentage of votes that participants learned had been counted at that point.

The “Vote count” column displays the running total of the votes each candidate had received.

In this table, we have denoted the candidates as “Winner” or “Loser” which refers to whether the candidate ended up winning or losing once the final votes were in (participants did not see these terms; instead they saw the names of the candidates).

See Figure 1 in the Summary of the methods section to see how these numbers were presented to participants at each timepoint.

| Timepoint | Late Lead Condition | Early Lead Condition | ||

| % of votes reported | Vote count | % of votes reported | Vote count | |

| 1 | 11.37% | Winner: 47.92% Loser: 52.08% | 6.54% | Winner: 70.13% Loser: 29.87% |

| 2 | 25.22% | Winner: 42.61% Loser: 57.39% | 18.65% | Winner: 68.40% Loser: 31.60% |

| 3 | 31.76% | Winner: 42.46% Loser: 57.54% | 29.45% | Winner: 62.10% Loser: 37.90% |

| 4 | 44.71% | Winner: 41.94% Loser: 58.06% | 39.28% | Winner: 59.95% Loser: 40.05% |

| 5 | 50.10% | Winner: 42.58% Loser: 57.42% | 49.90% | Winner: 57.69% Loser: 42.31% |

| 6 | 60.72% | Winner: 43.76% Loser: 56.24% | 55.29% | Winner: 56.74% Loser: 43.26% |

| 7 | 70.55% | Winner: 45.12% Loser: 54.88% | 68.24% | Winner: 53.68% Loser: 46.32% |

| 8 | 81.35% | Winner: 45.93% Loser: 54.07% | 74.78% | Winner: 52.65% Loser: 47.35% |

| 9 | 93.46% | Winner: 48.72% Loser: 51.28% | 88.63% | Winner: 50.40% Loser: 49.60% |

| 10 | 100.00% | Winner: 50.12% Loser: 49.88% | 100.00% | Winner: 50.12% Loser: 49.88% |

Additional Information about the Results

Below is the prompt we fed to ChatGPT-5.2-thinking (on December 22, 2025) in order to have it summarize the explanations participants provided in the study. As described in the section titled “Results from open-ended question,” we fed the model the responses from participants in the Early Lead Condition and the Late Lead Condition separately.

I have attached data from a study about election fraud. In this study, participants saw the progression of partial vote counts of an election in Eastern Europe. Participants saw the accumulated vote counts for two different candidates across 10 timepoints, where each timepoint corresponded to roughly 10% more of the vote coming in. The final timepoint showed the official, final vote count.

After participants saw the vote counts at all 10 timepoints, they were told:

“Shortly after the vote count was finished, rumours emerged that the vote count may have been rigged and that the wrong candidate won as a result. The people responsible for the vote count, however, denied the allegation.”

Participants then answered the two primary questions of interest:

“How likely do you think it is that the vote count was manipulated, on a scale from 1 (very unlikely) to 10 (very likely)?” (in this dataset, this is the column “likely_manipulated”)

“How likely do you think it is that the wrong candidate won, on a scale from 1 (very unlikely) to 10 (very likely)?” (in this dataset, this is the column “likely_wrong_candida”)

Participants were then asked to explain their reasoning for their responses to these questions (in this dataset, these explanations are shown in the column “explanation”).

Can you please summarize the different types of explanations participants provided?

You can view the full response from ChatGPT-5.2-thinking for the Late Lead Condition here (https://chatgpt.com/share/694981bd-8460-8006-ad49-a9030c424918) and the Early Lead Condition here (https://chatgpt.com/share/69504a28-95cc-8006-a90a-8c314ddd4b53).

References

Faul, F., Erdfelder, E., Buchner, A., & Lang, A.G. (2009). Statistical power analyses using G*Power 3.1: Tests for correlation and regression analyses. Behavior Research Methods, 41, 1149-1160. https://doi.org/10.3758/BRM.41.4.1149

Vaz, A., Ingendahl, M., Mata, A., & Alves, H. (2025). “Stop the Count!”—How Reporting Partial Election Results Fuels Beliefs in Election Fraud. Psychological Science, 36(8), 676-688. https://doi.org/10.1177/09567976251355594